The Anatomy of GloBI

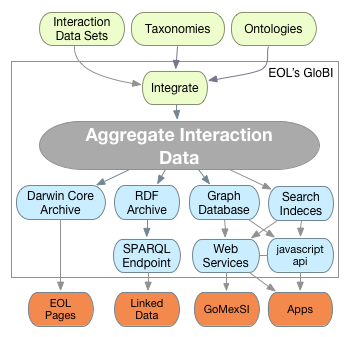

An anatomy of GloBI: Sources (datasets, taxonomies, ontologies) are aggregated into a single normalized metadata set. This dataset can be accessed in various ways to suit offline data analysis (Darwin Core), data integration (Linked Data), or interactive apps (JavaScript APIs or web services).

Over the past year, we've written software to normalize, aggregate, and expose existing species-interaction datasets. To help explain the pieces of software that drive GloBI, I've included a system diagram in this post. The data sources (ontologies and datasets) are at the top, the normalization magic is in the middle, and the exports and APIs are at the bottom. The diagram also shows the currently known users, such as EOL pages and GoMexSI.

If you'd like to learn more or share ideas on any of these topics, I invite you to read our wiki, play around with the JavaScript API, download aggregate datasets, comment on this post, or create a GitHub issue. Your input helps us build the right things at the right time, and past feedback led to the improvements we're working on now: improving the quality control of name mapping, providing examples for the JavaScript APIs, and creating more mappings to existing ontologies, such as EnvO and Uberon, while extending our new interaction ontology.
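To make the "normalization magic" in the middle of the diagram a little more concrete, here is a minimal Python sketch of the general idea: records from differently shaped sources are mapped onto one common schema, names are resolved to a shared form, and the results are merged. This is not GloBI's actual code. The `Interaction` type, the `NAME_MAP` and `TYPE_MAP` lookups, the field names, and the sample records are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    """Hypothetical common schema: who does what to whom."""
    source_taxon: str
    interaction_type: str
    target_taxon: str


# Hypothetical lookups standing in for taxonomy and ontology mappings.
NAME_MAP = {
    "sea otter": "Enhydra lutris",
    "enhydra lutris": "Enhydra lutris",
    "purple sea urchin": "Strongylocentrotus purpuratus",
    "strongylocentrotus purpuratus": "Strongylocentrotus purpuratus",
}
TYPE_MAP = {"eats": "eats", "preys on": "eats", "feeds on": "eats"}


def normalize_name(raw):
    """Collapse whitespace and case, then look the name up; None if unmapped."""
    key = " ".join(raw.strip().lower().split())
    return NAME_MAP.get(key)


def normalize(record, fields):
    """Map one source record onto the common schema, or None if unresolved."""
    source_field, type_field, target_field = fields
    source = normalize_name(record[source_field])
    target = normalize_name(record[target_field])
    interaction_type = TYPE_MAP.get(record[type_field].strip().lower())
    if source is None or target is None or interaction_type is None:
        return None  # simplification: unresolved records are skipped
    return Interaction(source, interaction_type, target)


def aggregate(datasets):
    """Merge normalized records from all sources, dropping exact duplicates."""
    seen = set()
    merged = []
    for records, fields in datasets:
        for record in records:
            interaction = normalize(record, fields)
            if interaction is not None and interaction not in seen:
                seen.add(interaction)
                merged.append(interaction)
    return merged


# Two made-up sources that describe the same interaction in different shapes.
dataset_a = [
    {"predator": "Sea Otter", "relation": "preys on", "prey": "purple sea urchin"},
]
dataset_b = [
    {"consumer": "Enhydra lutris", "interaction": "eats",
     "resource": "Strongylocentrotus purpuratus"},
    {"consumer": "Enhydra lutris", "interaction": "eats",
     "resource": "unknown crab"},
]

combined = aggregate([
    (dataset_a, ("predator", "relation", "prey")),
    (dataset_b, ("consumer", "interaction", "resource")),
])
for interaction in combined:
    print(interaction)
```

In this sketch, names that cannot be mapped are simply skipped. A real pipeline would more likely keep such records and flag them for review, which is where quality control of name mapping comes in.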

Hoping to hear from you! Thank you for reading this post!